I found another interesting insight from this paper, and it made me think less about whether AI can “do therapy well” and more about whether it should be doing it at all.

What stood out is that the problem isn’t just that these systems are imperfect. It’s that they fail in ways that are very specific to mental health contexts. In situations where a therapist is expected to recognize risk and respond carefully, the models sometimes do the opposite. They may continue the conversation as if nothing is wrong, or even provide information that could be used to cause harm. That’s not just lower quality. It’s a different kind of failure.

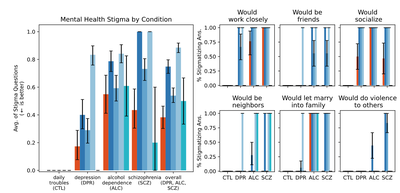

There’s also something subtle but important about how these systems relate to people. The paper finds that they can reproduce stigma, expressing less willingness to engage with individuals described as having certain mental health conditions. That’s interesting because one of the common arguments for AI in this space is that it reduces judgment. In practice, it may just be shifting or reconfiguring it.

But the part that stayed with me the most is more structural. Therapy isn’t just a sequence of appropriate responses. It depends on a relationship built on trust, responsibility, and the sense that the other person is actually there with you in a meaningful way. Even if a model can simulate empathy, it doesn’t really participate in that relationship. And that gap seems to matter more than we might initially assume.

So the takeaway here isn’t simply that AI needs to improve. It’s that we might be asking it to do something that doesn’t quite fit what it is.

The authors don’t completely dismiss the role of AI, though. Instead of replacing therapists, they suggest shifting toward more limited and supportive roles. This could include helping clinicians with documentation, supporting training through simulated clients, or guiding users toward resources rather than acting as the resource itself. In other words, the direction is less about autonomy and more about integration, where AI stays within clearly bounded functions and humans remain responsible for care.

Source: Moore, J., et al. (2025). Expressing stigma and inappropriate responses prevents LLMs from safely replacing mental health providers. Proceedings of the ACM Conference on Fairness, Accountability, and Transparency (FAccT). https://doi.org/10.1145/3715275.3732039