Lately, I have been trying to better understand how large language models are actually being used in research, beyond the usual hype. Along the way, I came across a paper that asks whether LLMs can work as genuine research tools rather than just writing aids. It turned out to be a surprisingly clean demonstration of what these models can realistically do.

What I found most interesting is how straightforward the setup was. The authors essentially treated GPT as a replacement for human annotators. They took large-scale text datasets, such as tweets, news headlines, and Reddit comments, that had already been manually coded for psychological constructs like sentiment, discrete emotions, offensiveness, and even moral foundations. Then they asked GPT to perform the same classification tasks using simple prompts. No fine-tuning, no complex modeling pipeline. Just prompting.
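To make the setup concrete, here is a minimal Python sketch of the two pieces you write yourself when using an LLM as an annotator: a prompt that asks for one constrained answer, and a parser that maps the reply back to a label. The prompt wording, the 1/2/3 coding scheme, and the names `build_sentiment_prompt` and `parse_label` are my own hypothetical choices for illustration, not the authors' exact prompts, and the actual call to the model is left out.

```python
import re
from typing import Optional

# Hypothetical coding scheme for illustration (not the paper's exact prompt).
SENTIMENT_LABELS = {1: "positive", 2: "neutral", 3: "negative"}


def build_sentiment_prompt(text: str) -> str:
    """Build a prompt that asks the model to answer with a single code."""
    return (
        "Is the sentiment of this text positive, neutral, or negative? "
        "Answer only with a number: 1 if positive, 2 if neutral, 3 if negative. "
        f"Here is the text: {text}"
    )


def parse_label(reply: str) -> Optional[str]:
    """Map the model's reply to a label; return None if no valid code is found."""
    match = re.search(r"\b([123])\b", reply)
    if match is None:
        return None
    return SENTIMENT_LABELS[int(match.group(1))]


# The prompt would be sent to a chat model through whatever client you use;
# here we only show how a reply string would be parsed.
prompt = build_sentiment_prompt("What a lovely morning!")
print(parse_label("1"))
```

Asking for a bare number keeps parsing trivial, and replies without a valid code come back as `None`, so they can be flagged for manual review instead of being silently miscoded.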

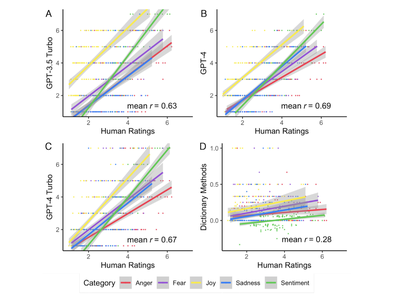

And it worked better than I expected. GPT's ratings agreed fairly well with the human annotations, with moderate to high correlations. Considering that human coding itself is not perfectly reliable, this level of agreement is already quite meaningful. What stood out even more is that GPT clearly outperformed traditional dictionary-based methods like LIWC, which are still widely used in psychology. That alone says a lot about how quickly the methodological landscape is shifting.

Another highlight is the multilingual aspect. One persistent limitation in computational social science is that methods often do not generalize well beyond English. In this study, GPT was applied across a wide range of languages, including less commonly studied ones, and still maintained reasonable performance. That opens up interesting possibilities for cross-cultural research on non-WEIRD (Western, Educated, Industrialized, Rich, Democratic) populations, which has long been difficult to do at scale.

From a methods perspective, this paper reinforces something I have been thinking about. LLMs are not necessarily replacing researchers, but they are clearly reshaping the workflow. Tasks that used to require extensive human coding or large labeled datasets can now be done with relatively little setup. That said, limitations remain. The model behaves like a black box, struggles more with complex or ambiguous constructs, and inevitably reflects biases present in its training data.

Overall, what I valued about this paper is not any claim that GPT is perfect, but that it shows a realistic use case. LLMs are already good enough to serve as a practical tool for text analysis, especially with large-scale or multilingual datasets. Even so, their output still requires careful interpretation and methodological caution.

I have enjoyed seeing how the usefulness of LLMs is being explored across different domains. Papers like this make it clear that we are no longer talking about AI as a future possibility. It is already becoming part of everyday research practice, and that everyday use is likely where the more meaningful changes will happen.

Source: Rathje, S., Mirea, D.-M., Sucholutsky, I., Marjieh, R., Robertson, C. E., & Van Bavel, J. J. (2024). GPT is an effective tool for multilingual psychological text analysis. Proceedings of the National Academy of Sciences, 121(34), e2308950121. https://doi.org/10.1073/pnas.2308950121